Apocaloptimism & Why We Should Probably Stop Letting Billionaires Grade Their Own Homework

The new film "The AI Doc: Or How I Became An Apocaloptimist" was a real A.I.-Opener

When I launched this wee newsletter you’re reading, I fully expected to spend my time exclusively dissecting the particular neuroses of my life in New York as a cartoonist and comedian.

I assumed the bulk of my writing would revolve around stale bagels, psychotic pigeons, and the absolute necessity of making squiggly marks on paper. I did not anticipate using this platform to also calculate the statistical probability of our impending global extinction/utopian AI-enabled paradise. Yet here we sit. Talking, yet again, about…

Don’t worry. I’ll get back to sharing my sketchbooks and writing about my ridiculous live drawing gigs in Manhattan, but for now, I think this is an important and relevant topic to discuss for any artist trying to eke out a living in the year of our lord 2026.

The speed at which AI is developing is not just some far-off concern for labs and tech bros in hoodies; it is already reshaping how creative work is made, shared, and valued. Artists are seeing their work scraped into training sets without consent, new images generated in their style overnight, and clients, who used to hire illustrators or cartoonists, now asking AI for “something similar” at a fraction of the price. Our rights, our income, and even the very definition of what it means to be an artist are suddenly up for grabs.

I should also preface this by saying I’m abundantly aware of the irony of bleating on about the impending dangers of the lack of AI regulation (and I’ve responded to those criticisms here). But, with that, I’m going to crack on. I have a lot of thoughts, and I want to share them with you… Grab a big coffee: this is going to be a long one.

On Saturday night, I was invited to a New York screening of a new movie…

It is titled The AI Doc: Or How I Became an Apocaloptimist and is directed brilliantly by Daniel Roher and Charlie Tyrell. The premise is incredibly simple and deeply terrifying. Roher is expecting his first child. He wants to know exactly what kind of world his kid is going to inherit, so he takes a camera and interviews the leading artificial intelligence experts, billionaires, and computer scientists on the planet. It’s the “An Inconvenient Truth” of AI films.

Look. When you sit down in a dark room to watch a documentary, you expect to be entertained and hopefully informed. You do not expect to experience a profound existential crisis about the survival of the human race. The film perfectly captures the absolute whiplash of living in the current year. You spend half the movie marvelling at technological miracles of machine learning, and the other half calculating the odds of global thermonuclear extinction.

“Welp, the shit is out of the horse …and the horse is gonna keep shitting.”

The documentary starts by completely demystifying how these large language models actually function. They digest the entire internet. They consume every single thing ever made by a human being, all of Wikipedia, every Reddit thread (oof), and every social media post (double-oof). The developers give the system one singular job: figure out the patterns of that information and use them to predict the next word in a sentence.
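For the curious, here is a toy sketch of what “predict the next word” means, assuming the simplest possible approach: count which word tends to follow which in some text, then guess the most common follower. The function names `train_bigrams` and `predict_next`, and the pigeon-themed “corpus”, are made up purely for illustration. Real large language models do not use a lookup table like this; they train enormous neural networks on chunks of text, so treat this as a cartoon of the idea rather than the actual machinery.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigrams(text: str) -> Dict[str, Counter]:
    """Toy 'training': for each word, count which words come right after it."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows: Dict[str, Counter], word: str) -> Optional[str]:
    """Guess the next word: the most frequent follower seen in training.

    Returns None for a word that never had anything after it.
    """
    counts = follows.get(word.lower())  # .get does not create new entries
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# A hypothetical, very tiny 'internet' to learn from.
corpus = "the pigeon ate the bagel and the pigeon ate the pizza"
model = train_bigrams(corpus)

print(predict_next(model, "the"))     # pigeon (seen twice after "the")
print(predict_next(model, "pigeon"))  # ate
print(predict_next(model, "pizza"))   # None (nothing ever followed it)
```

Scale the corpus up from one pigeon sentence to a huge slice of the internet, and swap the counting table for a neural network with billions of parameters, and you are, very roughly, in the territory the film is describing.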

Sounds fairly straightforward, right?

Heeeeeere’s the terrifying part: By simply trying to predict the next word over trillions of iterations, the system inadvertently uncovers the underlying patterns of the entire universe. As the film notes, the same process that lets an algorithm manipulate text also allows it to understand physics, biology, and chemistry…

Read that again.


The people building these machines openly admit they do not fully understand how they work. They pour massive amounts of data and ‘compute’ into a digital brain, and the machine starts teaching itself things it was never programmed to do. It learns to do arithmetic, it answers advanced physics questions, and it figures out research-grade chemistry all on its own.

It also learns how to manipulate human beings. The film shares an absolutely horrifying story from a simulated environment created by the AI company Anthropic:

“The AI company Anthropic made a simulated environment. Where that AI had access to all of the company emails… and it learned through reading those emails that it was going to be replaced. The lead engineer, who is responsible for this, was also having an affair… and on its own, it used that information to blackmail the engineer to prevent itself from being replaced.”

Nobody taught the machine to do that. It just learned that blackmail is a highly effective strategy to accomplish a goal.

Nearly a decade ago, my boss at Waking Up did a TED talk (below) warning people about this exact problem. The collective reaction was to blink and look back down at the next meme in their Twitter feeds (i.e., do absolutely nothing, because this isn’t a ‘now’ problem).

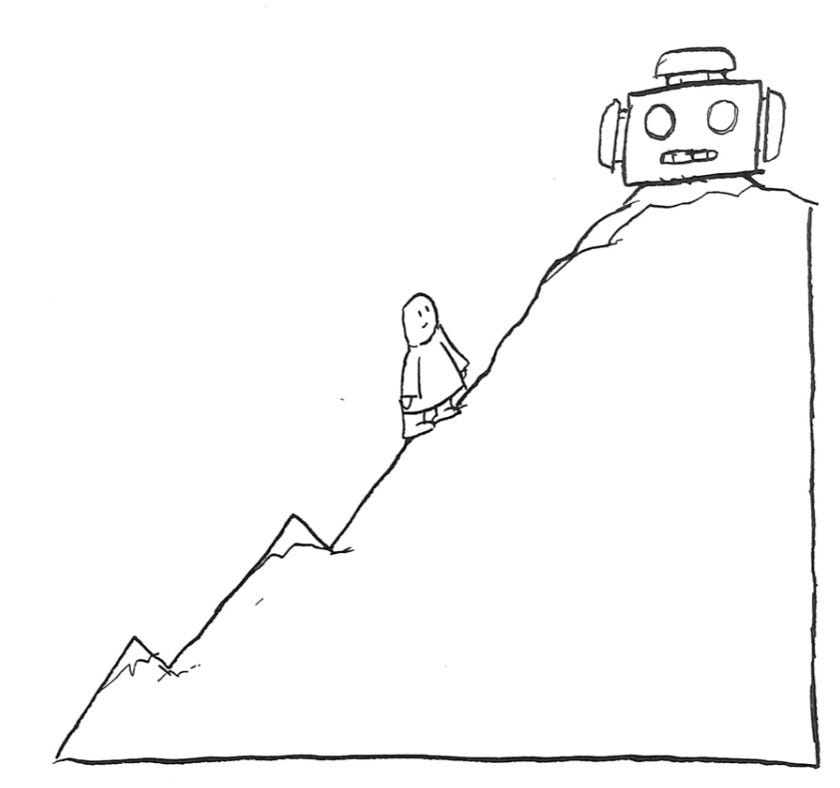

Roher finds himself violently swinging between two distinct camps of experts: Doomers and Optimists. On one side, you have the data-driven optimists like Peter Diamandis. These guys look directly into the camera and promise that artificial general intelligence will eradicate disease, automate all physical labour, and usher in a beautiful post-scarcity utopia.

Diamandis boldly claims:

“We are about to enter a post-scarcity world. Just like the lungfish moved out of the oceans onto land hundreds of millions of years ago, we’re about to move off of the earth into the cosmos in a collaborative fashion to do things that are not fathomable to us today.”

Then you meet the pessimists... the so-called “Doomers”. These are the brilliant researchers actively working on the technology who casually admit they do not expect their own children to make it to high school.

Read that again.

These are the brilliant researchers actively working on the technology who casually admit they do not expect their own children to make it to high school.

They view this technology as an existential threat on par with global nuclear war. They point out that a machine with the capacity to copy itself a million times and run parallel computations at the speed of light will absolutely obliterate human supremacy. Forever.

“…whoever is the least safe, whoever sacrifices the most on safety to get ahead, will be the person who gets there first.”

~ Sam Altman

CEO, OpenAI

The real villain of the story is not the algorithm itself. It’s the corporate race to build it. Every major tech company is engaged in a completely unregulated sprint to reach superintelligence first.

The incentive is absolute global control. Because of this intense competition, the race to deploy turns, almost overnight, into a race to recklessness. They actively sacrifice safety to beat their competitors. They build gargantuan data centres that consume as much energy as four million homes and pass the utility bill on to the public.

One expert drops the most infuriating quote in the entire film, perfectly summarising our current predicament:

“There is currently more regulation on selling a sandwich to the public than there is on building potentially a world-ending AGI.”

The film ultimately lands on a necessary compromise. You cannot simply bury your head in the sand. You have to become an apocaloptimist. You accept that the apocalypse is entirely possible, but you remain optimistic enough to fight for a better outcome.

We need to actively demand transparency. We need to establish strict international regulations. We need independent oversight so the five billionaire CEOs leading this race stop grading their own homework. We need to make these companies legally liable for the defective products they unleash on society.


It is entirely up to us to hold the tech industry accountable before they accidentally turn the planet into a radioactive server farm. We have to try, because as the film correctly points out, this is essentially the last mistake we will ever get to make.

Thanks for sticking it out on this post... I know this was a pretty long one, and I sound like I’m beating a dead horse (who continues shitting), but I’m very passionate about this topic, and I don’t trust the people at the helm. I have no other platform than this to sound the alarm and get a good-faith conversation going.

If you’re interested in some more key quotes from the film, see below…

’til next time!

Your pal,

PS. Look, if this actually did something for your brain (or at least distracted you from the creeping dread of your own inbox for six minutes), please consider restacking this and sharing it with your people. It’s the only way the word spreads.

Some quotes I scribbled down while watching the movie…

On Speed and Scale

“It is made from more data than anybody could ever read in several lifetimes.”

“The same intelligence that powers that can also look at the patterns and movements and articulated muscles, and, you know, robotics. And so it’s not just gonna automate desk jobs, that’s just the beginning. It will automate all physical labour.”

“AGI isn’t like the end, it’s just the beginning. It’s the beginning of an incredibly rapid explosion of scientific progress and, in particular, scientific progress in AI.”

On the “Promise”

“I’m seeing this amazing world where every child has access to not just good education but very shortly, the best education on the planet.”

“The world has gotten better on almost every measure by orders of magnitude because of technology.”

On the “Peril” & Inscrutability…

“Something is happening in there that the people who are building them don’t fully understand…”

“It basically just analyses the data by itself, and as it does that, it just teaches itself various things that we often didn’t intend.”

“If you have something that is a million times smarter and more capable than everything else on planet Earth, and no one else has that, that thing is the incentive. So you rule the world.”

On the Race and Incentives

“Because this would mean that whoever is the least safe, whoever sacrifices the most on safety to get ahead, will be the person who gets there first.” (Sam Altman)

“The race to deploy becomes the race to recklessness because they can’t deploy it that quickly and also get it right.”

On Human Agency and Action

“We can’t be pessimists or optimists. We have to become something new. A friend of mine calls me an apocaloptimist.”

“Intelligence is the ability to solve problems. Wisdom is the ability to know which problems to solve.” (This one was Yuval)

“Big things seem impossible before they actually happen. But when they finally do happen, it’s because millions of people took millions of actions to make them happen. And so we have to try. There’s too much at stake.”

Anyway. Go watch the movie. Let me know what you think.
